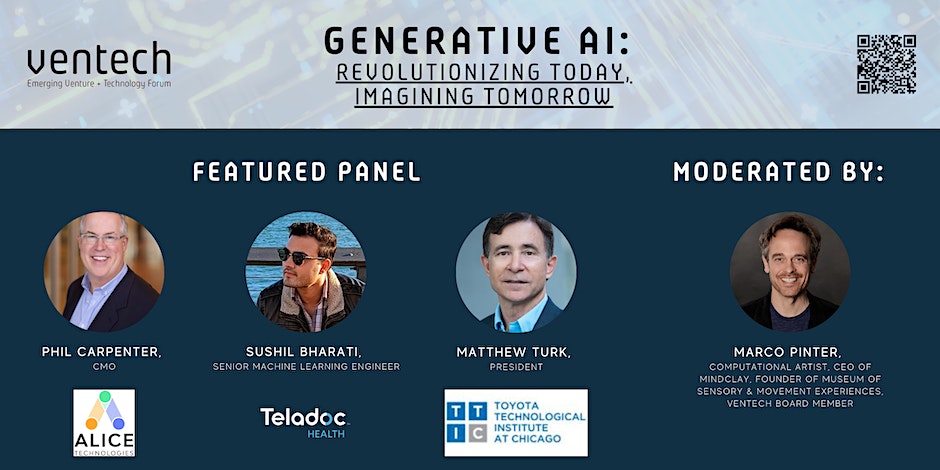

Delve deep into the transformative world of Generative AI in this enlightening panel discussion. Spanning the rich tapestry of academia to real-world applications in national and local industries, our distinguished panelists will shed light on current practices, novel innovations, and the future horizons of Generative AI. From the integration of this groundbreaking technology in sectors like healthcare and construction to its evolving academic research, join us for a comprehensive journey into the heart of AI's generative revolution. Witness firsthand the synthesis of theoretical insights with hands-on expertise, as we explore what the future holds for this dynamic field.

Abstract

This project aims to transform the nano-scale of a striking biological phenomenon, the relationship between SARS-CoV-2 coronavirus and human molecules, into an interactive audiovisual simulation. In this work, the interaction data between the spike protein of SARS-CoV-2 and human cellular proteins is measured by Atomic Force Microscopy, which can touch and image a single molecule. We are creating an interactive audiovisual installation and performance from a set of interaction data.

Co-authors of the paper are Yoojin Oh, senior scientist at the Institute of Biophysics, Johannes Kepler University Linz, and JoAnn Kuchera-Morin, Director and Chief Scientist of the AlloSphere Research Facility and professor of Media Arts and Technology and Music at the University of California, Santa Barbara.

Abstract

Dynamic Theater explores the use of augmented reality (AR) in immersive theater as a platform for digital dance performances. The project presents a locomotion-based experience that allows for full spatial exploration. A large indoor AR theater space was designed to allow users to freely explore the augmented environment. The curated wide-area experience employs various guidance mechanisms to direct users to the main content zones. Results from our 20-person user study show how users experience the performance piece while using a guidance system. The importance of stage layout, guidance system, and dancer placement in immersive theater experiences is highlighted, as these factors cater to user preferences while enhancing the overall reception of digital content in wide-area AR. Observations from working with dancers and choreographers, as well as their experience and feedback, are also discussed. Co-authors are Joshua Lu and Tobias Höllerer.

Reality Distortion Room: A Study of User Locomotion Responses to Spatial Augmented Reality Effects

Abstract

Reality Distortion Room (RDR) is a proof-of-concept augmented reality system using projection mapping and unencumbered interaction with the Microsoft RoomAlive system to study a user’s locomotive response to visual effects that seemingly transform the physical room the user is in. This study presents five effects that augment the appearance of a physical room to subtly encourage user motion. Our experiment demonstrates users’ reactions to the different distortion and augmentation effects in a standard living room, with the distortion effects projected as wall grids, furniture holograms, and small particles in the air. The augmented living room can give the impression of becoming elongated, wrapped, shifted, elevated, and enlarged. The study results support the implementation of AR experiences in limited physical spaces by providing an initial understanding of how users can be subtly encouraged to move throughout a room. Co-authors are Andrew D. Wilson, Jennifer Jacobs, and Tobias Höllerer.

Synaptic Time Tunnel, SIGGRAPH 2023.

Sponsored by Autodesk, the Synaptic Time Tunnel was a tribute to 50 years of innovation and achievement in the field of computer graphics and interactive techniques that have been presented at the SIGGRAPH conferences.

An international audience of more than 14,275 attendees from 78 countries enjoyed the conference and its Mobile and Virtual Access component.

Contributors:

Marcos Novak - MAT Chair and transLAB Director, UCSB

Graham Wakefield - York University, UCSB

Haru Ji - York University, UCSB

Nefeli Manoudaki - transLAB, MAT/UCSB

Iason Paterakis - transLAB, MAT/UCSB

Diarmid Flatley - transLAB, MAT/UCSB

Ryan Millet - transLAB, MAT/UCSB

Kon Hyong Kim - AlloSphere Research Group, MAT/UCSB

Gustavo Rincon - AlloSphere Research Group, MAT/UCSB

Weihao Qiu - Experimental Visualization Lab, MAT/UCSB

Pau Rosello Diaz - transLAB, MAT/UCSB

Alan Macy - BIOPAC Systems Inc.

JoAnn Kuchera-Morin - AlloSphere Research Group, MAT/UCSB

Devon Frost - MAT/UCSB

Alysia James - Department of Theater and Dance/UCSB

More information about the Synaptic Time Tunnel can be found in the following news articles:

Forbes.com: SIGGRAPH 2023 Highlights

The MAT alumni that were selected to participate are:

Yoon Chung Han

Solen Kiratli

Hannen E. Wolfe

Yin Yu

Weidi Zhang

Rodger (Jieliang) Luo

The International Symposium on Electronic Art is one of the world’s most prominent international arts and technology events, bringing together scholarly, artistic, and scientific domains in an interdisciplinary discussion and showcase of creative productions applying new technologies in art, interactivity, and electronic and digital media.

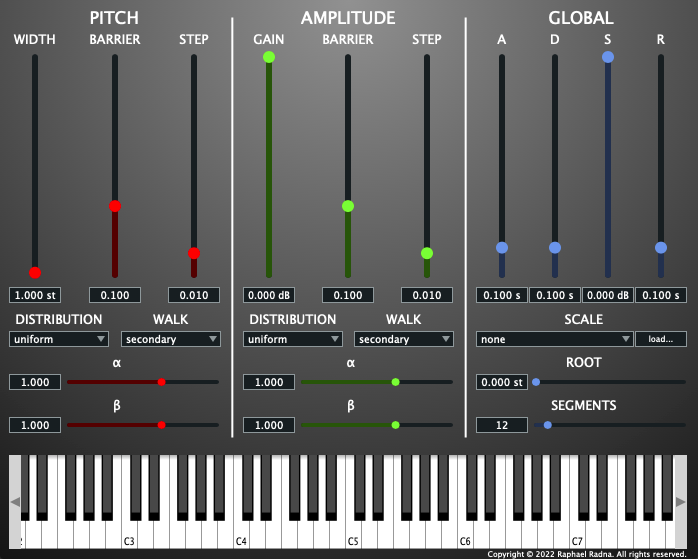

Released in March 2023, Xenos is a virtual instrument plug-in that implements and extends the Dynamic Stochastic Synthesis (DSS) algorithm invented by Iannis Xenakis and notably employed in the 1991 composition GENDY3. DSS produces a wave of variable periodicity through regular stochastic variation of its wave cycle, resulting in emergent pitch and timbral features. While high-level parametric control of the algorithm enables a variety of musical behaviors, composing with DSS is difficult because its parameters lack basis in perceptual qualities.
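The idea of a wave cycle whose shape and length drift stochastically can be illustrated with a minimal sketch. This is not the Xenos source code. It assumes a common textbook formulation of DSS in which each cycle is defined by a handful of breakpoints, each breakpoint's amplitude and duration take a bounded random-walk step once per cycle, and the samples between breakpoints are linearly interpolated. All names and default values (`dss_cycles`, `amp_step`, `dur_step`, the duration limits) are hypothetical.

```python
import random


def reflect(value, low, high):
    """Fold a value back into [low, high] by mirroring it at the barriers."""
    while value < low or value > high:
        value = 2 * low - value if value < low else 2 * high - value
    return value


def dss_cycles(num_cycles, num_breakpoints=8, amp_step=0.1, dur_step=2.0,
               min_dur=4, max_dur=40, seed=0):
    """Generate samples for successive wave cycles (illustrative only)."""
    rng = random.Random(seed)
    # Each breakpoint has an amplitude in [-1, 1] and a duration in samples.
    amps = [rng.uniform(-1.0, 1.0) for _ in range(num_breakpoints)]
    durs = [rng.uniform(min_dur, max_dur) for _ in range(num_breakpoints)]
    samples, period_lengths = [], []
    for _ in range(num_cycles):
        # One random-walk step per breakpoint per cycle, kept within barriers.
        amps = [reflect(a + rng.uniform(-amp_step, amp_step), -1.0, 1.0)
                for a in amps]
        durs = [reflect(d + rng.uniform(-dur_step, dur_step), min_dur, max_dur)
                for d in durs]
        cycle_length = 0
        for i in range(num_breakpoints):
            start, end = amps[i], amps[(i + 1) % num_breakpoints]
            n = max(1, round(durs[i]))
            # Linear interpolation from this breakpoint toward the next one.
            samples.extend(start + (end - start) * k / n for k in range(n))
            cycle_length += n
        period_lengths.append(cycle_length)
    return samples, period_lengths


samples, period_lengths = dss_cycles(num_cycles=20)
```

Because the cycle length is the sum of the drifting breakpoint durations, the perceived pitch wanders along with the waveform shape, which is one way to see why the raw parameters do not map directly onto perceptual qualities.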

Xenos thus implements DSS with modifications and extensions that enhance its suitability for general composition. Written in C++ using the JUCE framework, Xenos offers DSS in a convenient, efficient, and widely compatible polyphonic synthesizer that facilitates composition and performance through host-software features, including MIDI input and parameter automation. Xenos also introduces a pitch-quantization feature that tunes each period of the wave to the nearest frequency in an arbitrary scale. Custom scales can be loaded via the Scala tuning standard, enabling both xenharmonic composition at the mesostructural level and investigation of the timbral effects of microtonal pitch sets on the microsound timescale.
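As a rough illustration of the pitch-quantization idea, the sketch below snaps the frequency implied by one wave period to the nearest pitch of a scale. It is not taken from the Xenos code base. It assumes that the scale is given as cent offsets within a repeating octave (similar in spirit to a Scala scale), that "nearest" is measured in cents rather than in Hz, and that the reference pitch is 440 Hz. The function names and parameters are hypothetical.

```python
import math


def quantize_frequency(freq_hz, scale_cents, base_hz=440.0, period_cents=1200.0):
    """Return the scale frequency closest (in cents) to freq_hz."""
    cents = 1200.0 * math.log2(freq_hz / base_hz)
    octave = math.floor(cents / period_cents)
    # Check the neighbouring octaves too, so pitches near an octave
    # boundary can snap upward or downward.
    candidates = [o * period_cents + step
                  for o in (octave - 1, octave, octave + 1)
                  for step in scale_cents]
    nearest = min(candidates, key=lambda c: abs(c - cents))
    return base_hz * 2.0 ** (nearest / 1200.0)


def quantized_period_samples(freq_hz, sample_rate, scale_cents):
    """Length in samples of one wave period after quantization."""
    return max(1, round(sample_rate / quantize_frequency(freq_hz, scale_cents)))


# Twelve-tone equal temperament expressed as cent offsets.
twelve_tet = [100.0 * i for i in range(12)]
snapped = quantize_frequency(445.0, twelve_tet)  # about 20 cents above A4
```

Swapping `twelve_tet` for any other list of cent offsets gives a different tuning, which is the sense in which custom scales open up xenharmonic material.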

A good review of Xenos can be found at Music Radar: www.musicradar.com/news/fantastic-free-synths-xenos.

Xenos GitHub page: github.com/raphaelradna/xenos.

There is also an introductory YouTube video:

Raphael completed his Master's degree in Media Arts and Technology in the Fall of 2022 and is currently pursuing a PhD in Music Composition at UCSB.

Some of the works presented are:

The Impact of Navigation Aids on Search Performance and Object Recall in Wide-Area Augmented Reality (Paper). You-Jin Kim (MAT PhD student) and Radha Kumaran (CS PhD student).

Abstract

Head-worn augmented reality (AR) is a hotly pursued and increasingly feasible contender paradigm for replacing or complementing smartphones and watches for continual information consumption. Here, we compare three different AR navigation aids (on-screen compass, on-screen radar and in-world vertical arrows) in a wide-area outdoor user study (n=24) where participants search for hidden virtual target items amongst physical and virtual objects. We analyzed participants’ search task performance, movements, eye-gaze, survey responses and object recall. There were two key findings. First, all navigational aids enhanced search performance relative to a control condition, with some benefit and strongest user preference for in-world arrows. Second, users recalled fewer physical objects than virtual objects in the environment, suggesting reduced awareness of the physical environment. Together, these findings suggest that while navigational aids presented in AR can enhance search task performance, users may pay less attention to the physical environment, which could have undesirable side-effects.

Comparing Zealous and Restrained AI Recommendations in a Real-World Human-AI Collaboration Task. Chengyuan Xu (MAT PhD student), Kuo-Chin Lien (Appen), Tobias Höllerer (MAT, CS Professor).

Abstract

When designing an AI-assisted decision-making system, there is often a tradeoff between precision and recall in the AI’s recommendations. We argue that careful exploitation of this tradeoff can harness the complementary strengths in the human-AI collaboration to significantly improve team performance. We investigate a real-world video anonymization task for which recall is paramount and more costly to improve. We analyze the performance of 78 professional annotators working with a) no AI assistance, b) a high-precision "restrained" AI, and c) a high-recall "zealous" AI in over 3,466 person-hours of annotation work. In comparison, the zealous AI helps human teammates achieve significantly shorter task completion time and higher recall. In a follow-up study, we remove AI assistance for everyone and find negative training effects on annotators trained with the restrained AI. These findings and our analysis point to important implications for the design of AI assistance in recall-demanding scenarios.
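The precision and recall tradeoff mentioned in the abstract can be made concrete with a small worked example. The sketch below is not the authors' system. It assumes, purely for illustration, that a "restrained" and a "zealous" recommender differ only in the confidence threshold at which a detection is shown to the annotator. The scores and labels are made-up toy data.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when every score >= threshold is recommended."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y)
    fp = sum(1 for s, y in pairs if s >= threshold and not y)
    fn = sum(1 for s, y in pairs if s < threshold and y)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Toy data (hypothetical): model confidence and whether the region
# really needs anonymizing.
scores = [0.95, 0.90, 0.80, 0.60, 0.55, 0.40, 0.30, 0.20]
labels = [True, True, True, False, True, False, True, False]

restrained = precision_recall(scores, labels, threshold=0.75)  # (1.0, 0.6)
zealous = precision_recall(scores, labels, threshold=0.25)     # (~0.71, 1.0)
```

In this toy case the high threshold never raises a false alarm but misses two of the five true items, while the low threshold catches all five at the cost of two false alarms that a human would have to dismiss.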

PunchPrint: Creating Composite Fiber-Filament Craft Artifacts by Integrating Punch Needle Embroidery and 3D Printing (Paper). Ashley Del Valle (MAT PhD student), Mert Toka (MAT PhD student), Alejandro Aponte (MAT PhD student), Jennifer Jacobs (MAT Assistant Professor).

Abstract

New printing strategies have enabled 3D-printed materials that imitate traditional textiles. These filament-based textiles are easy to fabricate but lack the look and feel of fiber textiles. We seek to augment 3D-printed textiles with needlecraft to produce composite materials that integrate the programmability of additive fabrication with the richness of traditional textile craft. We present PunchPrint: a technique for integrating fiber and filament in a textile by combining punch needle embroidery and 3D printing. Using a toolpath that imitates textile weave structure, we print a flexible fabric that provides a substrate for punch needle production. We evaluate our material’s robustness through tensile strength and needle compatibility tests. We integrate our technique into a parametric design tool and produce functional artifacts that show how PunchPrint broadens punch needle craft by reducing labor in small, detailed artifacts, enabling the integration of openings and multiple yarn weights, and scaffolding soft 3D structures.

Fencing Hallucination: An Interactive Installation for Fencing with AI and Synthesizing Chronophotographs. Weihao Qiu (MAT PhD student) and George Legrady (MAT Professor).

Abstract

Fencing Hallucination is a multi-screen interactive installation that enables real-time human-AI interaction in the form of a Fencing game and generates a chronophotograph based on the audience’s movement. It mitigates the conflicts between interactivity, modality variety, and computational limitation in creative AI tools. Fencing Hallucination captures the audience’s pose data as an input to the Multilayer Perceptron (MLP), which generates the virtual AI Fencer’s pose data. It also uses the audience’s pose to synthesize the chronophotograph. The system first represents pose data as stick figures. Then it uses a diffusion model to perform image-to-image translations, converting the stick figures into a series of realistic fencing images. Finally, it combines all images with an additive effect into one image as the result. This multi-step process overcomes the challenge of preserving both the overall motion patterns and fine details when synthesizing a chronophotograph.
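The final compositing step described above can be sketched in a few lines. This is only an illustration of an additive blend, not the installation's code. It assumes grayscale frames stored as nested lists of integers and assumes that sums are clipped at the maximum pixel value. The abstract specifies only "an additive effect", so both choices are hypothetical, as is the function name.

```python
def additive_composite(frames, max_value=255):
    """Sum same-sized grayscale frames pixel by pixel, clipping at max_value."""
    height, width = len(frames[0]), len(frames[0][0])
    result = [[0] * width for _ in range(height)]
    for frame in frames:
        for y in range(height):
            for x in range(width):
                result[y][x] = min(max_value, result[y][x] + frame[y][x])
    return result


# Three tiny frames with one bright "figure" pixel moving left to right.
frames = [
    [[200, 0, 0], [0, 0, 0]],
    [[0, 200, 0], [0, 0, 0]],
    [[0, 0, 200], [0, 0, 0]],
]
chronophotograph = additive_composite(frames)
```

Because each frame only adds light, every position the figure occupied stays visible in the single output image, which is the visual signature of a chronophotograph.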

Abstract

Complex systems in nature unfold over many spatial and temporal dimensions. The systems that are easy for us to perceive as the world around us are limited by what we can see, hear, and interact with. But what about complex systems that we cannot perceive, those that exist at the atomic or sub-atomic scale? Can we bring these systems to human scale and view this data just as we view real-world phenomena? For a composer working with sound across many spatial and temporal dimensions, shape and form come to life through sound transformation. What seems to be visually imperceptible becomes real and visually perceptible in the composer’s mind. As media artists we can now take these transformational structures from the auditory to the visual and interactive domain through frequency transformation. Can we apply these transformations to complex, imperceptible scientific models in order to see, hear, and interact with these systems, bringing them to human scale?

About the SPARKS session:

Our understanding of the world is limited by the capacity of our senses to ingest information and also by our brain’s ability to interpret it. Through the use of technology, we know that the universe we live in is far more complex and rich with information than what can be perceived by humanity. From microscopic to cosmic, information that transcends our lived experiences is difficult to comprehend. Our ability to augment our senses with technology has resulted in an accumulation of vast amounts of data, often in a form that needs to be translated to be understood. This SPARKS session explores the conceptual and creative aspects of scientific visualization.

https://dac.siggraph.org/the-art-of-scientific-visualization-perceiving-the-imperceptible

DAC SPARKS - The Art of Scientific Visualization: Perceiving the Imperceptible - April 28, 2023

EmissionControl2 is a granular sound synthesizer. The theory of granular synthesis is described in the book Microsound (Curtis Roads, 2001, MIT Press).

Released in October 2020, the new app was developed by a team consisting of Professor Curtis Roads acting as project manager, with software developers Jack Kilgore and Rodney Duplessis. Kilgore is a computer science major at UCSB. Duplessis is a PhD student in music composition at UCSB and is also pursuing a Master's degree in the Media Arts and Technology graduate program.

EmissionControl2 is free and open-source software available at: github.com/jackkilgore/EmissionControl2/releases/latest

The project was supported by a Faculty Research Grant from the UCSB Academic Senate.